Scenario testing is carried out by mimicking or exaggerating real-life scenarios. It requires close coordination with business users, who can help define plausible scenarios and workflows that mimic end-user behavior. Real-life domain knowledge is critical to creating accurate scenarios. We test the system from an end-to-end perspective, but not necessarily as a black box. We use a technique called “soap opera testing.” The idea is to take a scenario that is based on real life, exaggerate it the way TV soap operas exaggerate behavior and emotions, and compress it into a quick sequence of events. We raise questions like “What’s the worst thing that can happen, and how did it happen?”

When testing different scenarios, we ensure that both the data and the flow are realistic. We find out whether the data comes from another system or is entered manually, and where possible we ask customers to provide a sample of data for testing. Real data flows through the system and can be checked along the way. In large systems, the data will behave differently depending on the decisions made. When testing end to end, we make spot checks to confirm that the data, status flags, calculations, and so on are behaving as expected. We use flow diagrams and other visual aids to help us understand the functionality and carry out the testing accordingly.

Exploratory testing (ET) is a sophisticated, thoughtful approach to testing without a script, and it enables us to go beyond the obvious variations that have already been tested. Exploratory testing combines learning, test design, and test execution into one test approach. We apply heuristics and techniques in a disciplined way so that the “doing” reveals more implications than just thinking about a problem. As we test, we learn more about the system under test and can use that information to help us design new tests.

Exploratory testing is not a means of evaluating the software through exhaustive testing. It is meant to add another dimension to testing. Exploratory testing uses the tester’s understanding of the system, along with critical thinking, to define focused, experimental “tests” which can be run in short time frames and then fed back into the test planning process.

Exploratory testing is based on:

Risk (analysis): The critical things the customer or end user thinks can go wrong, or potential problems that will make people unhappy.

Models (mental or otherwise) of how software should behave: We and/or the customer have a great expectation about what the newly produced function should do or look like, so we test that.

Past experience: Think about how similar systems have failed (or succeeded) in predictable patterns that can be refined into a test, and explore it.

What the development team is telling us: We talk to developers and find out what “is important to us.”

Most importantly: What we learn (see and observe) as we test. As we learn during testing, we quickly see tests based on such things as customer needs, common mistakes the team seems to be making, or good/bad characteristics of the product.

Several components are typically needed for useful exploratory testing:

Test Design: We understand many test methods and call different methods into play on the fly during the exploration. This agility is a big advantage of exploratory testing over automated (scripted) procedures, where things must be thought out in advance.

Careful Observation: Our exploratory testers are good observers. They watch for the unusual and unexpected and are careful about assumptions of correctness. They might observe subtle software characteristics or patterns that drive them to change the test in real time.

Critical Thinking: The ability to think openly and with agility is a key reason to have thinking humans doing non-automated exploratory testing. Exploratory testers can review and redirect a test into unexpected directions on the fly. They should also be able to explain their logic in looking for defects and to provide clear status on testing. Critical thinking is a learned human skill.

Diverse Ideas: Experienced testers and subject matter experts can produce more and better ideas. Our Exploratory testers can build on this diversity during testing. One of the key reasons for exploratory tests is to use critical thinking to drive the tests in unexpected directions and find errors.

Rich Resources: Exploratory testers develop a large set of tools, techniques, test data, friends, and information sources upon which they draw.

Session-based testing combines accountability and exploratory testing. It gives a framework to a tester’s exploratory testing experience so that they can report results in a consistent way. In session-based testing, we create a mission or a charter and then time-box our session so we can focus on what’s important. Too often as testers, we can go off track and end up chasing a bug that might or might not be important to what we are currently testing. Sessions are divided into three kinds of tasks: test design and execution, bug investigation and reporting, and session setup. We measure the time we spend on setup versus actual test execution so that we know where we spend the most time. We can capture results in a consistent manner so that we can report back to the team.
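The time split described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `Session` record, its field names, and the sample charters are all hypothetical, and the numbers are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One time-boxed exploratory session (hypothetical record layout)."""
    charter: str          # the mission for this session
    minutes_setup: int    # session setup
    minutes_testing: int  # test design and execution
    minutes_bugs: int     # bug investigation and reporting

    @property
    def total_minutes(self) -> int:
        return self.minutes_setup + self.minutes_testing + self.minutes_bugs


def time_breakdown(sessions):
    """Return the percentage of total session time spent on each kind of task."""
    total = sum(s.total_minutes for s in sessions)
    if total == 0:
        return {"setup": 0.0, "testing": 0.0, "bugs": 0.0}
    return {
        "setup": 100 * sum(s.minutes_setup for s in sessions) / total,
        "testing": 100 * sum(s.minutes_testing for s in sessions) / total,
        "bugs": 100 * sum(s.minutes_bugs for s in sessions) / total,
    }


# Illustrative data only.
sessions = [
    Session("Explore order checkout with invalid coupons", 15, 60, 15),
    Session("Explore bulk customer import", 30, 45, 15),
]
report = time_breakdown(sessions)
for task, pct in report.items():
    print(f"{task}: {pct:.1f}%")
```

A report like this makes it easy to see when setup is crowding out actual test execution across sessions.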

1-Easy Access to Information

2-Easy Navigation to gather the information

3-Information is presented in an efficient way

4-Look and feel is good and enables easy categorization of information

5-Enables users to carry out transactions or functionality in easy and short steps

6-Addresses all levels and types of users – Novice, intermediate and expert

User-friendly

In an application or product, we must be concerned with qualities such as security, maintainability, interoperability, compatibility, reliability, and installability. We call these qualities the “ilities,” and the testing carried out to validate them ILITY Testing.

Security does not end in -ility, but we include it in the “ility” bucket because we use technology-facing tests to appraise the security aspects of the product. Security is a top priority for every organization these days. Every organization needs to ensure the confidentiality and integrity of its software. Organizations also want to verify concepts such as non-repudiation: a guarantee that a message has been sent by the party that claims to have sent it and received by the party that claims to have received it. The application needs to perform correct authentication, confirming each user’s identity, and authorization, allowing the user access only to the services they are authorized to use. We have a separate Testing Competency Center to carry out security testing.
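The difference between authentication and authorization can be illustrated with a small Python sketch. Everything here is an assumption made for the example: the in-memory `USERS` store, the user names, passwords, and service names are hypothetical, and a real application would rely on its own identity provider rather than code like this.

```python
import hashlib
import hmac
import os


def hash_password(password: str, salt: bytes) -> bytes:
    """Derive a password hash with PBKDF2-HMAC-SHA256 from the standard library."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def make_user(password: str, services: set) -> dict:
    """Build a hypothetical user record with its own random salt."""
    salt = os.urandom(16)
    return {"salt": salt, "hash": hash_password(password, salt), "services": services}


# Hypothetical in-memory user store, for illustration only.
USERS = {
    "alice": make_user("correct horse", {"reports", "billing"}),
    "bob": make_user("battery staple", {"reports"}),
}


def authenticate(username: str, password: str) -> bool:
    """Authentication: confirm the user's identity."""
    user = USERS.get(username)
    if user is None:
        return False
    candidate = hash_password(password, user["salt"])
    return hmac.compare_digest(user["hash"], candidate)  # constant-time comparison


def authorize(username: str, service: str) -> bool:
    """Authorization: allow access only to services granted to this user."""
    user = USERS.get(username)
    return user is not None and service in user["services"]


def can_access(username: str, password: str, service: str) -> bool:
    return authenticate(username, password) and authorize(username, service)


print(can_access("alice", "correct horse", "billing"))
print(can_access("bob", "battery staple", "billing"))
```

A security test would exercise both checks separately: a valid user with the wrong password must fail authentication, and a correctly authenticated user must still be refused a service they were never granted.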

We encourage development teams to develop standards and guidelines that they follow for application code, the test frameworks, and the tests themselves. Teams that develop their own standards, rather than having them set by some other independent team, will be more likely to follow them because they make sense to them.

The kinds of standards we mean include naming conventions for method names or test names. All guidelines should be simple to follow and make maintenance easier.

Standards for developing the GUI also make the application more testable and maintainable, because testers know what to expect and don’t need to wonder whether a behavior is right or wrong. It also adds to testability if you are automating tests from the GUI.

Simple standards such as “Use names for all GUI objects rather than defaulting to the computer-assigned identifier” or “You cannot have two fields with the same name on a page” help the team achieve a level where the code is maintainable, as are the automated tests that provide coverage for it.
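Standards like these can be checked automatically. The sketch below, using only Python's standard `html.parser`, scans an HTML page for form fields that have no `name` and for names used more than once. The set of tags treated as "fields" and the sample page are assumptions for the example; a team might, for instance, choose to exempt radio button groups, which conventionally share a name.

```python
from collections import Counter
from html.parser import HTMLParser

# Tags treated as form fields in this sketch (an assumption, adjust as needed).
FIELD_TAGS = {"input", "select", "textarea", "button"}


class FieldNameCollector(HTMLParser):
    """Collect the name attribute of every form field on a page."""

    def __init__(self):
        super().__init__()
        self.names = []   # every non-empty name found on a field
        self.unnamed = 0  # fields with a missing or empty name

    def handle_starttag(self, tag, attrs):
        if tag not in FIELD_TAGS:
            return
        name = dict(attrs).get("name")
        if name:
            self.names.append(name)
        else:
            self.unnamed += 1


def check_page(html: str) -> dict:
    """Report unnamed fields and duplicated field names for one page."""
    collector = FieldNameCollector()
    collector.feed(html)
    collector.close()
    counts = Counter(collector.names)
    duplicates = sorted(name for name, count in counts.items() if count > 1)
    return {"unnamed": collector.unnamed, "duplicates": duplicates}


# Illustrative page that breaks both standards.
page = """
<form>
  <input type="text" name="email">
  <input type="text" name="email">
  <input type="password">
  <select name="country"></select>
</form>
"""
result = check_page(page)
print(result)
```

Run as part of a build, a check of this kind flags violations before they reach the GUI automation suite.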

Maintainability is an important factor for automated tests as well. Database maintainability is also important. The database design needs to be flexible and usable. Every iteration might bring tasks to add or remove tables, columns, constraints, or triggers, or to do data conversion. These tasks become a bottleneck if the database design is poor or the database is cluttered with invalid data.

Interoperability refers to the capability of diverse systems and organizations to work together and share information. Interoperability testing looks at end-to-end functionality between two or more communicating systems. These tests are done in the context of the user, whether a human or a software application, and look at functional behavior.

Reliability of software can be referred to as the ability of a system to perform and maintain its functions in routine circumstances as well as unexpected circumstances. The system also must perform and maintain its functions with consistency and repeatability. Reliability analysis answers the question, “How long will it run before it breaks?” Some statistics used to measure reliability are:
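Two statistics commonly used for this purpose are mean time between failures (MTBF) and mean time to repair (MTTR), from which availability can be derived. Choosing these particular statistics is an assumption made for illustration, and the function name, inputs, and sample figures below are hypothetical.

```python
def reliability_stats(uptime_hours, repair_hours):
    """Compute MTBF, MTTR, and availability from observed failures.

    uptime_hours[i] is how long the system ran before failure i;
    repair_hours[i] is how long it took to restore service afterwards.
    """
    if not uptime_hours or len(uptime_hours) != len(repair_hours):
        raise ValueError("need one uptime and one repair time per failure")
    failures = len(uptime_hours)
    mtbf = sum(uptime_hours) / failures    # mean time between failures
    mttr = sum(repair_hours) / failures    # mean time to repair
    availability = mtbf / (mtbf + mttr)    # fraction of time in service
    return mtbf, mttr, availability


# Illustrative figures only: three failures during a test run.
mtbf, mttr, availability = reliability_stats([100, 200, 300], [1, 2, 3])
print(f"MTBF: {mtbf:.1f} h, MTTR: {mttr:.1f} h, availability: {availability:.2%}")
```

The longer the MTBF and the shorter the MTTR, the closer availability gets to 100%.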

Performance testing is carried out to verify that the application’s response time is appropriate and does not degrade as load increases, for both current and forecasted requirements. It monitors the performance of the application’s components on the client and server while simulating the desired load with virtual users. There are different tools for generating virtual users in particular patterns and for monitoring the client and server components of the application. Performance is critical across all levels of business, as the list below shows.

> Faster response times

> Support for increasing number of users

> Stability on wide range of workload patterns

> Error-free executions

> CPU and memory usage below pre-configured levels

> Improved disk read /write activity

> Optimum network utilization

> Performance compliance

> Architecture validations

> Software / hardware bench marking

> Capacity planning

> Higher ROI

> Lower running costs

> Better customer satisfaction by catering to increasing business demand

The different types of performance testing are carried out to meet specific performance objectives:

> Load Test – To determine the scalability of the application under real world scenarios

> Stress Test – To determine the breaking point of the server

> Volume Test – To test the performance of the application at high volumes of data

> Endurance Test / Soak Test – To determine the stability of the application by executing the load test for an extended period of time

> Spike Test – To determine whether the application sustains a sudden increase in load during abnormal conditions
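One way to picture how these test types differ is by the shape of the virtual-user load over time. The sketch below is only an illustration: the function name, the default of 100 "normal" users, the ramp length, and the 5x spike multiplier are all assumptions, not values from any particular tool. The volume test is left out because it varies the amount of data rather than the number of users.

```python
def load_profile(kind: str, duration_min: int, normal_users: int = 100) -> list:
    """Return the target number of virtual users for each minute of a test."""
    if duration_min <= 0:
        raise ValueError("duration_min must be positive")
    if kind == "load":
        # Ramp up to the expected real-world load over the first quarter, then hold.
        ramp = max(1, duration_min // 4)
        return [min(normal_users, normal_users * (m + 1) // ramp)
                for m in range(duration_min)]
    if kind == "stress":
        # Keep adding users past normal load to look for the breaking point.
        step = max(1, normal_users // 4)
        return [step * (m + 1) for m in range(duration_min)]
    if kind == "soak":
        # Hold normal load steadily for an extended period.
        return [normal_users] * duration_min
    if kind == "spike":
        # Normal load with a sudden burst in the middle minute.
        profile = [normal_users] * duration_min
        profile[duration_min // 2] = normal_users * 5
        return profile
    raise ValueError(f"unknown profile: {kind}")


for kind in ("load", "stress", "soak", "spike"):
    print(kind, load_profile(kind, 8))
```

In practice a load-testing tool would be configured with a schedule of this kind, while monitoring tools watch response times and resource usage as the user count changes.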

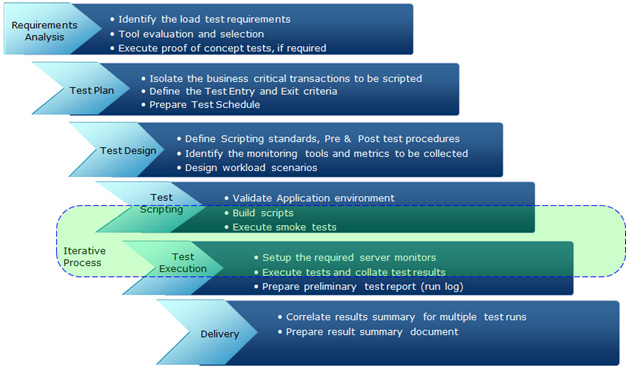

Shriya Consultancy Services follows a standard, proven performance testing methodology. The methodology defines five phases (Requirements Analysis, Test Plan, Test Design, Test Scripting, and Test Execution), followed by Delivery. The accompanying diagram depicts the key activities carried out across the phases.

We have a well-defined questionnaire that helps our clients document their performance testing requirements when those requirements are not clearly defined in the requirement documents.

> Tools – We have expertise in different performance testing and monitoring tools, but our focus is on open-source tools like JMeter to reduce the cost of performance testing. We help our clients migrate to JMeter from licensed tools like LoadRunner or Performance Center. Some of the tools we use are:

> Performance Testing – Apache JMeter, LoadRunner, WebLOAD, BlazeMeter

> Performance Monitoring Tools – PerfMon, JConsole, VisualVM

JMeter, WebLOAD, LoadRunner, OpenSTA, NeoLoad, WAPT